Lately I’ve been thinking about how we could build an alternative to Google’s Chromecast and Sonos systems with open-source technology.

The ideal solution would be something that could run on a Raspberry Pi or a similar device and that would be backwards compatible with the Chromecast API. However, performance could be a problem on such devices.

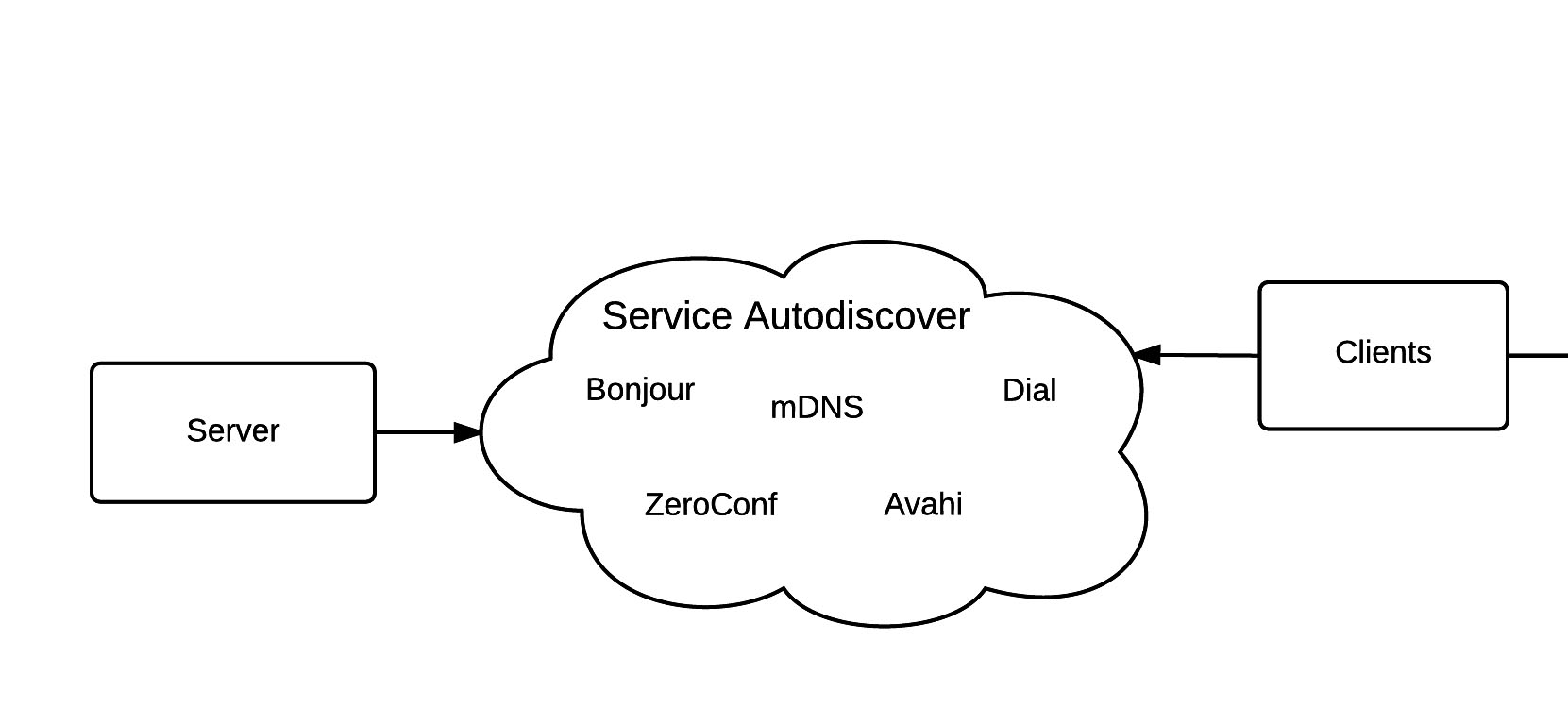

The first problem is to find an open-source way to auto-discover our service from various devices, so we can simply list the available servers in our application without having to supply an IP address.

Service Auto-Discover

To auto-discover the service we could use any of the technologies listed below:

ZeroConf: This is a set of technologies for zero-configuration networking, including one for automatically locating network services such as printers.

Bonjour: Bonjour is Apple’s implementation of ZeroConf. It can locate devices such as printers and other computers, and the services running on those devices.

Avahi: This is also an implementation of ZeroConf. It is the standard implementation on free-software operating systems such as GNU/Linux.

DIAL: “Discovery And Launch”, or DIAL for short, is a protocol developed by Netflix and YouTube. This is the technology Chromecast has used in the past.

mDNS: This is the technology Chromecast uses at the moment. Multicast DNS is part of ZeroConf and uses essentially the same programming interfaces as regular unicast DNS.

The next step here would be to evaluate all of these technologies to see which would work best for our scenario.
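To get a feeling for how little is needed on the client side, here is a minimal sketch of an mDNS lookup using only the Python standard library. It builds a plain DNS question for PTR records and sends it to the mDNS multicast group. The service name `_googlecast._tcp.local` is what Chromecast devices advertise; the function names `build_ptr_query` and `discover` are my own, and the sketch does not parse the replies, it only collects the addresses of hosts that answered. A real implementation would more likely build on Avahi or Bonjour.

```python
import socket
import struct

MDNS_GROUP = "224.0.0.251"  # IPv4 multicast address reserved for mDNS
MDNS_PORT = 5353
CAST_SERVICE = "_googlecast._tcp.local"  # service type advertised by Chromecast devices


def build_ptr_query(service_name: str) -> bytes:
    """Build a DNS query packet asking for PTR records of service_name."""
    # Header: ID 0, flags 0 (standard query), 1 question, no other records.
    header = struct.pack("!6H", 0, 0, 1, 0, 0, 0)
    # The name is encoded as length-prefixed labels, terminated by a zero byte.
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in service_name.split(".")
    ) + b"\x00"
    # QTYPE 12 = PTR, QCLASS 1 = IN.
    return header + qname + struct.pack("!2H", 12, 1)


def discover(service_name: str = CAST_SERVICE, timeout: float = 2.0) -> set[str]:
    """Send one query and return the IP addresses of hosts that replied.

    Hypothetical helper: it stops once no reply arrives for `timeout` seconds.
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.settimeout(timeout)
    responders = set()
    try:
        sock.sendto(build_ptr_query(service_name), (MDNS_GROUP, MDNS_PORT))
        while True:
            try:
                _data, (address, _port) = sock.recvfrom(4096)
            except socket.timeout:
                break
            responders.add(address)
    finally:
        sock.close()
    return responders
```

Calling `discover()` on a network with Chromecast devices should return their addresses; our own server would simply advertise a different service name.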

Media Streaming

For the replacement to be effective, it would have to support at least the major video and audio streaming services.

Off the top of my head, this would be the following: Netflix, YouTube, Vimeo, Google Music, Spotify. If you think other services should be added to this list, please leave a comment.

Also, it should be possible to stream local media or media from network-attached storage (NAS).

For the actual streaming we can use either the individual API for the service or the DLNA standard for streaming of local or remote content.

To distribute the audio for multi-room playback we could use the Real Time Streaming Protocol (RTSP), so the audio stays in sync across rooms. The hard part here would be to balance the delay of the audio controls against the syncing of the audio: more buffering makes syncing easier, but it also makes play, pause and skip feel slower.
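The trade-off can be sketched with a bit of arithmetic. This is only an illustration under my own assumptions, not part of RTSP: every room knows how far its clock is off from the server's clock and how long its audio output takes, and the server picks one shared moment in the future at which the sound should be heard. The names `Room`, `clock_offset`, `output_latency` and `buffer_delay` are made up for this example.

```python
from dataclasses import dataclass


@dataclass
class Room:
    name: str
    clock_offset: float    # seconds this room's clock is ahead of the server's clock
    output_latency: float  # seconds between handing audio to the device and hearing it


def local_start_times(rooms, server_now, buffer_delay):
    """Return, per room, the local clock time at which playback has to start."""
    # Shared moment, measured on the server clock, when the sound should be heard.
    target = server_now + buffer_delay
    return {
        # Convert to the room's clock, then start early by its output latency.
        room.name: target + room.clock_offset - room.output_latency
        for room in rooms
    }


rooms = [Room("kitchen", 0.010, 0.050), Room("living room", -0.020, 0.120)]
for name, start in local_start_times(rooms, server_now=100.0, buffer_delay=0.5).items():
    print(f"{name}: start at local time {start:.3f}")
```

Here `buffer_delay` is exactly the control delay the user feels. It has to be larger than the slowest room's network delay plus output latency, so the sync quality and the responsiveness pull in opposite directions.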

Server Interface

The server should be controllable through a command-line interface for audio-only servers, and it should also be able to show the content on a display for video streaming (Netflix, YouTube, Vimeo, etc.).

If nothing is streamed we could display either pictures or some kind of looping video like a video of a fireplace.

For servers without real displays it should be possible to display the meta information about the music on a liquid crystal display.

The key ingredient for the interface should be performance. The time between the user clicking the stream button and the video actually appearing on the display is critical.

Clients

To compete with the Chromecast, there should at least exist a browser extension as well as an Android and an iPhone application.

Everything should be built in a modular way, so we can add and remove support for services without changing anything under the hood. The system would only offer a base layer on which the services can build.

This would mean that a client may support only some services and still connect to a server that provides more services than the client does.
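A minimal sketch of how such a base layer could look is shown below. All class and function names (`Service`, `ServiceRegistry`, `usable_services`) are hypothetical, and `play` only returns a description instead of streaming anything. The point is that services are registered and removed at runtime, and that a client and a server can always fall back to the services they have in common.

```python
from abc import ABC, abstractmethod


class Service(ABC):
    """Base layer: every streaming service plugin implements this interface."""

    name: str

    @abstractmethod
    def play(self, media_id: str) -> str:
        """Start playback and return a short status message."""


class YouTubeService(Service):
    name = "youtube"

    def play(self, media_id: str) -> str:
        return f"playing YouTube video {media_id}"


class LocalFilesService(Service):
    name = "local"

    def play(self, media_id: str) -> str:
        return f"playing local file {media_id}"


class ServiceRegistry:
    """Keeps track of the services a server currently offers."""

    def __init__(self):
        self._services = {}

    def register(self, service: Service) -> None:
        self._services[service.name] = service

    def unregister(self, name: str) -> None:
        self._services.pop(name, None)

    def names(self) -> set:
        return set(self._services)

    def play(self, name: str, media_id: str) -> str:
        return self._services[name].play(media_id)


def usable_services(client_supported: set, server: ServiceRegistry) -> set:
    """Services a given client can actually use on a given server."""
    return client_supported & server.names()


server = ServiceRegistry()
server.register(YouTubeService())
server.register(LocalFilesService())

# A client that only knows YouTube and Vimeo can still use the server for YouTube.
print(usable_services({"youtube", "vimeo"}, server))
```

Removing a service is then just `server.unregister("local")`, and nothing else in the system has to change.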

Further progress and current status

I’d really like to get some feedback on this article. It may be that this is complete gibberish, so if you have any questions or ideas, feel free to comment or contact me directly.

At the moment this is only a concept, but if you are interested in working on this or have experience with any of these topics, don’t hesitate to contact me.

Twitter: @robinglauser

Google+: robinglauser

Github: nahakiole

E-Mail: robin.glauser@gmail.com

Hey Martin, I haven’t thought about this project for a while. At the moment I have Chromecast Audios all over the house to listen to music, but they aren’t perfect and sometimes don’t work. Are you working on a similar project or do you have ideas for something similar?

Are you still thinking about this?